How intelligent should the AI be?

Planted February 14, 2012

Having spent the last few days reading and thinking about how to implement an intelligent system that plays StarCraft, I have also had time to think about what it implies to call a system “intelligent”.

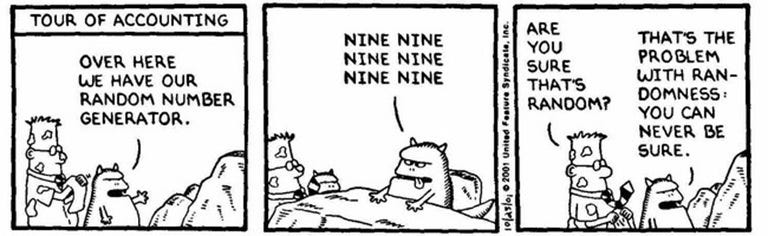

Nowadays we can develop systems that beat human players in certain genres. Some board games, like chess, and most shooters are built on a limited set of rules that can be modeled fairly easily with a combination of techniques (an expert system that covers most of the rules for almost all situations is a typical choice). As my colleague Bruno points out (link in Spanish), we even have to limit the intelligence of those systems by applying “stupidifying” techniques. In one of the examples Bruno provides, the AI is only allowed to attack the human player after spotting him, which leads to very weird situations like the one shown in the following video.
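To make the “attack only after spotting” rule concrete, here is a minimal sketch of such a perception gate. Everything in it (the `Agent` class, `can_see`, `choose_action`, the view range and field-of-view values) is a hypothetical illustration, not code from any real game or from Bruno's examples.

```python
import math
from dataclasses import dataclass


@dataclass
class Agent:
    x: float
    y: float
    facing_deg: float = 0.0  # direction the agent is looking at, in degrees


def can_see(bot, target, view_range=10.0, fov_deg=90.0):
    """Return True if target is within range and inside the bot's view cone.

    view_range and fov_deg are made-up tuning values for this sketch.
    """
    dx, dy = target.x - bot.x, target.y - bot.y
    if math.hypot(dx, dy) > view_range:
        return False
    angle_to_target = math.degrees(math.atan2(dy, dx))
    # Smallest signed difference between the two angles, in [-180, 180).
    diff = (angle_to_target - bot.facing_deg + 180) % 360 - 180
    return abs(diff) <= fov_deg / 2


def choose_action(bot, player):
    # The "stupidifying" rule: the bot may know where the player is,
    # but it is only allowed to act on that once it has a line of sight.
    return "attack" if can_see(bot, player) else "patrol"


bot = Agent(0.0, 0.0, facing_deg=0.0)
print(choose_action(bot, Agent(5.0, 0.0)))   # player in front of the bot
print(choose_action(bot, Agent(-1.0, 0.0)))  # player right behind the bot
```

The second call is the kind of weird situation mentioned above: the player stands one step behind the bot, and the bot keeps patrolling because the rule forbids it from reacting.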

But this does not apply to strategy games. In complex systems like strategy games, a human player generally has far more capacity for analysis and improvisation, so the usual way to empower the AI is to let it cheat (either through direct benefits or through access to information that a human player in the same situation could not have). The expert-system approach, which works well in most other scenarios, can’t be applied here: implementing all the possible rules for even one single (and simple) situation is an overwhelming task. Cheating AI is a well-known aspect of Sid Meier’s Civilization series; in those games, the player must build his empire from scratch, while the computer’s empire receives additional units at no cost and is freed from most resource restrictions.

How can we solve this? Well, one idea is to apply learning techniques. In the last AIIDE competition, the Berkeley team designed a special training field, aptly named Valhalla. Instead of adjusting the parameters of the AI by hand, they let the AI fight on its own for a large number of iterations, analyze its own results and adjust the parameters in whatever way suited it best. The result can be seen in the following video: they trained a group of mutalisks to massacre their natural counter unit, something unlikely if a human were controlling the units.
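The idea of letting the AI tune its own parameters by fighting over and over can be sketched in a few lines. This is only a toy: it uses plain random hill climbing, which is not necessarily what the Berkeley team did, and `simulate_skirmish`, the two parameter names and their “ideal” values are all invented for the example. In a real bot, the simulated fight would be an actual game run.

```python
import random


def simulate_skirmish(params, rng):
    """Hypothetical stand-in for one fight; returns a noisy score.

    The toy score is highest when both parameters hit made-up ideal
    values that the learner does not know in advance.
    """
    ideal = {"retreat_health": 0.3, "spread_distance": 4.0}
    error = sum((params[k] - ideal[k]) ** 2 for k in ideal)
    return -error + rng.gauss(0, 0.01)


def evaluate(params, rng, fights=20):
    # Average several fights so that one lucky result does not mislead us.
    return sum(simulate_skirmish(params, rng) for _ in range(fights)) / fights


def train(initial, iterations=500, step=0.2, seed=0):
    """Random hill climbing: perturb the parameters, keep improvements."""
    rng = random.Random(seed)
    best = dict(initial)
    best_score = evaluate(best, rng)
    for _ in range(iterations):
        candidate = {k: v + rng.gauss(0, step) for k, v in best.items()}
        score = evaluate(candidate, rng)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


start = {"retreat_health": 0.9, "spread_distance": 1.0}
tuned, score = train(start)
print(tuned, score)
```

Nobody tells the loop what a good `retreat_health` is; it only sees the outcome of its own fights and keeps whatever works better, which is the spirit of the training field described above.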

But we are still far from an AI that can defeat humans in complex scenarios. Moore’s law, despite its limitations, is helping us, but computing power is not the only problem we face. We also need to find different ways to model the complex scenarios that the human mind is good at analyzing and where the computer still lags behind. And if we end up using cheating to defeat a human intelligence, we have to make it completely invisible to the player: the user will not care as long as beating the AI is still a challenge for him.